The basic quantity in the formalism is a spatially local information, based on observing the states on one side (either left or right) of the position of interest. From the internal statistics of the spatial pattern, a local conditional probability is formed, which in turn defines a local information quantity as follows. In information-theoretic terms, one expects that when a less common local configuration is encountered at a certain position $i$ in the sequence of cells, the conditional probability of that configuration, given for example the $n$-length symbol sequence in the cells to its left, will be relatively small. This implies a high local information for that conditional probability,

$$I^{(L)}_{i,n} = \log \frac{1}{p(z_i \mid z_{i-n} \ldots z_{i-1})}, \qquad (1)$$

where $z_i$ denotes the symbol at position $i$; see [Helvik et al, 2007]. A corresponding local information $I^{(R)}_{i,n}$, conditioned on the $n$ cells to the right of position $i$, is defined similarly. Such a local information quantity has a spatial average that can be written

$$\langle I^{(L)}_{n} \rangle = \lim_{N \to \infty} \frac{1}{2N+1} \sum_{i=-N}^{N} I^{(L)}_{i,n}. \qquad (2)$$

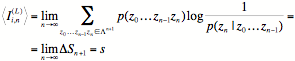
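The definition of the left-sided local information and its spatial average can be illustrated with a minimal Python sketch. It is not taken from the cited works: the function name `left_local_information`, the periodic boundary, and the use of empirical block frequencies from the sequence itself as probability estimates are all assumptions made for illustration.

```python
from collections import Counter
from math import log2

def left_local_information(z, n):
    """Return log2(1 / p(z_i | z_{i-n} ... z_{i-1})) for every position i.

    Assumptions (not from the source): z is a string treated as periodic,
    and probabilities are estimated from block frequencies within z.
    """
    N = len(z)
    ext = z[N - n:] + z  # prepend the wrap-around left context
    ctx = Counter(ext[i:i + n] for i in range(N))      # n-block counts
    blk = Counter(ext[i:i + n + 1] for i in range(N))  # (n+1)-block counts
    # p(z_i | context) = blk / ctx, so the information is log2(ctx / blk).
    return [log2(ctx[ext[i:i + n]] / blk[ext[i:i + n + 1]]) for i in range(N)]

# A periodic "01" background with a single extra "0" inserted as a defect.
z = "01" * 10 + "0" + "01" * 10
info = left_local_information(z, 1)
peak = max(range(len(z)), key=info.__getitem__)  # position 21, the second of the two adjacent 0s
spatial_average = sum(info) / len(z)             # finite-sample version of the spatial average
```

In this toy sequence the rare block "00" produces a high local information at the defect, while the regular background contributes values close to zero.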

By the ergodic theorem, one can conclude that, on average, the local information equals the entropy $s$ of the system in the limit of large $n$,

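$$\langle I^{(L)}_{n} \rangle = \sum_{x_1 \ldots x_{n+1}} p(x_1 \ldots x_{n+1}) \log \frac{1}{p(x_{n+1} \mid x_1 \ldots x_n)} \to s \quad \text{as } n \to \infty. \qquad (3)$$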
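The step from a spatial average to an entropy can be checked numerically on a finite sample. The sketch below rests on assumptions that are not in the source: a periodic string, empirical block frequencies as probabilities, and the hypothetical helper names `block_counts`, `block_entropy` and `mean_left_information`. Under these assumptions the spatial average of the left-sided local information coincides with the difference of block entropies $S_{n+1} - S_n$, which for a stationary ergodic source tends to the entropy per symbol as $n$ grows.

```python
from collections import Counter
from math import log2

def block_counts(z, m):
    """Counts of all length-m blocks in the periodic string z (assumes m <= len(z))."""
    ext = z + z[:m]
    return Counter(ext[i:i + m] for i in range(len(z)))

def block_entropy(z, m):
    """Shannon entropy in bits of the empirical m-block distribution."""
    N = len(z)
    return sum(c / N * log2(N / c) for c in block_counts(z, m).values())

def mean_left_information(z, n):
    """Spatial average of log2(1 / p(symbol | n symbols to its left))."""
    N = len(z)
    ctx, blk = block_counts(z, n), block_counts(z, n + 1)
    ext = z + z[:n + 1]
    return sum(log2(ctx[ext[i:i + n]] / blk[ext[i:i + n + 1]])
               for i in range(N)) / N

z = "0110100110010110" * 4  # arbitrary test string, chosen only for illustration
for n in range(4):
    delta_s = block_entropy(z, n + 1) - block_entropy(z, n)
    print(n, mean_left_information(z, n), delta_s)  # the two columns agree
```

For short samples the estimates at large $n$ are dominated by sparse block statistics, so in practice $n$ has to stay small compared with the logarithm of the sample length.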

For details of this derivation, which involves information theory for symbol sequences, see the lecture notes [Lindgren, 2008]. A similar expression holds for the corresponding right-sided quantity $\langle I^{(R)}_{n} \rangle$. We can then define a local information as the average of the two,

$$I_{i,n} = \frac{1}{2}\left( I^{(L)}_{i,n} + I^{(R)}_{i,n} \right). \qquad (4)$$

This means that the local information $I_{i,n}$, like its left- and right-sided versions, has a spatial average that equals the entropy of the system in the limit of large $n$. In the limit of an infinitely long conditioning sequence, we define the local (left-sided) entropy $s_L(i)$ at position $i$,

$$s_L(i) = \lim_{n \to \infty} I^{(L)}_{i,n}, \qquad (5)$$

and the right-sided counterpart is defined similarly.
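The two-sided local information, taken as the average of the left- and right-conditioned values, can be sketched as follows. As before, this is an illustration under stated assumptions rather than the procedure of the cited works: the sequence is a periodic string, probabilities are empirical block frequencies, and the names `one_sided_information` and `local_information` are hypothetical. The right-sided value is obtained by applying the left-sided computation to the mirrored string.

```python
from collections import Counter
from math import log2

def one_sided_information(z, n):
    """log2(1 / p(z_i | n symbols to the left of i)) for a periodic string z."""
    N = len(z)
    ext = z[N - n:] + z  # prepend the wrap-around left context
    ctx = Counter(ext[i:i + n] for i in range(N))
    blk = Counter(ext[i:i + n + 1] for i in range(N))
    return [log2(ctx[ext[i:i + n]] / blk[ext[i:i + n + 1]]) for i in range(N)]

def local_information(z, n):
    """Two-sided value: mean of the left- and right-conditioned information."""
    left = one_sided_information(z, n)
    # Conditioning on the right is conditioning on the left in the mirrored string.
    right = one_sided_information(z[::-1], n)[::-1]
    return [(l + r) / 2 for l, r in zip(left, right)]

z = "01" * 10 + "0" + "01" * 10  # periodic background with one defect
two_sided = local_information(z, 1)
```

In this example the left-sided value peaks on the second of the two adjacent zeros and the right-sided value on the first, so the two-sided quantity spreads the defect symmetrically over both cells. Increasing $n$ moves the estimate towards the local entropy of the limit definition, at the cost of poorer statistics for finite samples.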